Good Practice Study on Principles for Indicator Development, Selection, and Use in Climate Change Adaptation Monitoring and Evaluation

Report Summary

The Study’s Structure

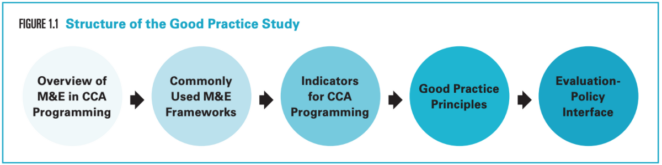

The study first looks at monitoring and evaluation (M&E) for climate change adaptation (CCA) in a broader context to identify the key challenges (chapter 2) and to see how M&E is being applied in the adaptation field (chapter 3). It reviews the types of adaptation indicators that are commonly used (chapter 4), and then moves into a narrower discussion of what practitioners need to consider when developing better, more useful indicators. It next documents good practice principles that help define indicators for adaptation interventions (chapter 5). Finally, it looks at how the evaluation-policy interface can support better adaptation policies, and whether good practice principles can inform greater uptake of evaluation results as evidence in policy making (chapter 6).

In the following sections of this blog post I focus primarily on information from the chapter summaries. Though concise, these summaries offer only a small taste of the depth and breadth of the topics discussed in the study report.

This weADAPT article is an abridged version of the original text, which can be downloaded from the right-hand column. Please access the original text for research purposes, full references, or to quote text.

The Current Discourse

M&E has a central role in identifying future adaptation pathways and developing an evidence base for future projects, programs, and policies. The wide range and complexity of adaptation M&E challenges require that practitioners identify them at the outset of CCA programming. Challenges include grounding M&E systems across temporal and spatial scales, the complexity of determinants and influences (attribution gap), a lack of conceptual clarity on terminology, the uncertainty of climate change and climate variability, dealing with counterfactual scenarios, and mitigating maladaptation.

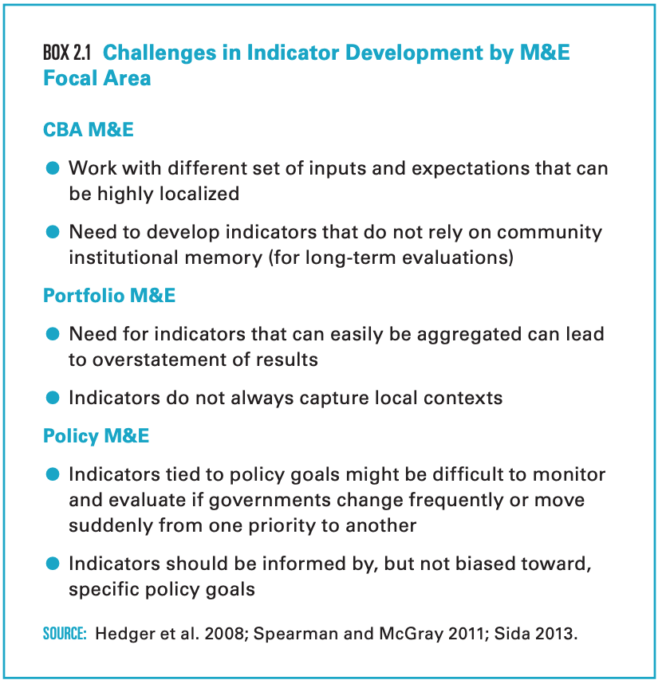

CCA M&E systems can be grouped into one of three focal areas: community-based adaptation (CBA), portfolio, and policy. Each focus has distinct characteristics and indicator challenges, though in practice these groupings are not treated in isolation from one another.

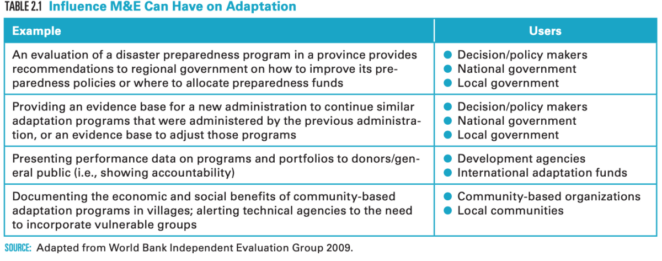

Evaluation use should govern the M&E context, and a client perspective should be taken when framing the M&E system. Who will be the end users of the information? How will the information be used? Answering these questions can increase the likelihood of evaluations being used for adaptive learning.

Commonly Used Frameworks

M&E frameworks are often developed with a specific spatial scale in mind, informed by the organization’s focus, though with upward or downward linkages to other scales. Indicators are tailored to the M&E frameworks’ use. For example, some top-down frameworks of multilateral funds and institutions make use of more quantifiable indicators, predetermined core indicators, and scorecards that can be easily aggregated to the portfolio level. Bottom-up frameworks with a focus on CBA provide more space for the use of more qualitative indicators and the development of local, context-specific indicator sets.

M&E frameworks vary in the ways they group indicators. For example, UNDP categorizes indicators according to coverage, impact, sustainability, and replicability, while Spearman and McGray (2011) and Olivier, Leiter, and Linke (2013) focus on three broad dimensions (adaptive capacity, adaptation action, and sustained development). The TANGO framework (Frankenberger et al. 2013b), on the other hand, focuses on adaptation capacities (absorptive capacity, adaptive capacity, and transformative capacity).

Many frameworks stress the need for practitioners to critically reflect on the process of indicator development. Broad reflective questions are provided in many examples, but most frameworks fall short of providing clear good practice principles of indicator development and selection.

Adaptation Indicators: Purpose and Classifications

Indicators underpin an M&E system and provide information on change arising from interventions. They can be used as an accountability tool (measuring achievements and reporting on them), a management tool (tracking performance and providing data to steer interventions), and a learning tool (providing evidence on what works and why).

The most frequently used types of adaptation indicators – quantitative, qualitative, behavioral, economic, process, and output/outcome – do not differ from those found in development programming. Where they do differ is in how they are combined to measure contribution and impact.

Indicators can be grouped into various classifications. The most common grouping in adaptation is based on a logical framework composed of output/outcome, process, and impact indicators. Emerging types of indicator classifications include those that focus on the dimension of the adaptation activity, and the capacities that foster true resilience.

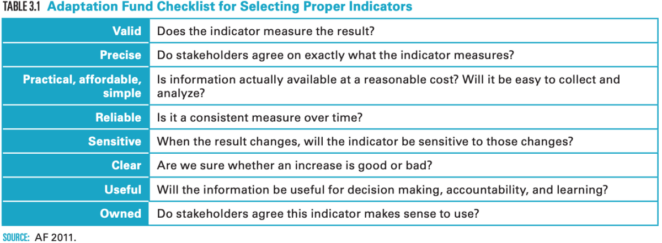

Good Practice Principles for Indicators

Chapter 5 provides guidance on indicator development and selection, with principles categorized by their position in the project cycle. For each stage of the cycle, it poses questions in a series of tables to help guide thinking toward implementation of these principles. Any attempt to further summarize these already concise tables would omit important elements of the good practice principles. I therefore refer readers to the chapter itself and offer only a limited number of takeaways below.

Given the local contextualization of climate impacts, adaptation lends itself well to local stakeholder consultation and other participatory processes. These processes should focus on the development of the intervention, the logframe and theory of change, and the M&E system; indicator development and selection; and data collection. This participatory approach helps to capture both the local context and the wider enabling environment.

Good indicators are not carved in stone and are never a substitute for thoughtful analysis and interpretation. Given the dynamism and uncertainty surrounding how climate change will play out at the local level, practitioners need to remain flexible and open to changing the indicators developed at the start of a project when the actual climate reality changes.

Good Practice Principles toward Better Evaluation Utilization in Policy Making

A good number of good practice principles are put forward in chapter 6 and I will not delve into those in this blog post.

Evaluative evidence remains underutilized in policy making. We would urge evaluators, even when an evaluation has no specific policy-making aim, to consider its policy-making relevance and, where relevant, to reflect on how the evaluation’s findings can inform policy making.

Collaborative evidence-based policy development is the healthiest relationship between evaluator and policy maker, with both assuming an equal interest in supporting evaluations as evidence in the policy-making process. The difference between this and evidence-based policy making is that the evaluator’s role goes beyond providing evaluative information to also assuming responsibility for actively supporting different steps in the policy process.

Conclusion

As this study has shown, indicators are tailored to the M&E frameworks’ purpose; multilateral funds and development agencies mostly use top-down frameworks which include more quantifiable indicators, predetermined core indicators, and scorecards that can be easily aggregated. Interventions that focus on CBA use bottom-up M&E frameworks, participatory engagement, and qualitative indicators. As the CCA M&E field advances, more and more M&E frameworks are taking a two-tier approach, in which top-down and bottom-up approaches (including indicators) mutually reinforce one another.

One of the main conclusions of the study is that there is no single set of universal or standard adaptation indicators. Providing examples of indicators that can be useful in adaptation programming will not contribute to advancing the field. Thus, good practice principles for selecting, developing, and using CCA indicators have been proposed.

Another conclusion is that the direct link between indicators and their role in evidence-based policy making in adaptation is a thin one. There are few or no examples of adaptation policy making that has been guided by indicators per se. The data from indicators are channeled into the overall knowledge base that is needed to inform policy making. Additionally, there is a practice gap in using evaluative evidence to inform climate change adaptation policy making.

We hope this study will enrich the conversation on what constitutes ‘good’ indicators for adaptation, and will give practitioners a better understanding of how to develop and use indicator sets within the complexity and dynamism that is inherent to climate change adaptation.

Recommended citation

Leagnavar, P., Bours, D., and McGinn, C., 2015. Good Practice Study on Principles for Indicator Development, Selection, and Use in Climate Change Adaptation Monitoring and Evaluation. A. Viggh Ed. Washington, DC: Climate-Eval Community of Practice and the Global Environment Facility’s Independent Evaluation Office (GEF IEO).
